Articles, Blogging, Humor, Journals, Literature, Paper, Random thoughts

Tag: Articles

18 Posts

AIM, Articles, Computational Chemistry, FMO, Photochemistry, Photosynthesis, Publications, TD-DFT

Mg²⁺ Needs a 5th Coordination in Chlorophylls – New paper in IJQC

Articles, Blogging, Random thoughts, Writing

I’m done with Computational Studies

ACS, Articles, Computational Chemistry, FMO, Photochemistry, Photosynthesis, Proteins

The Evolution of Photosynthesis

Articles, Blogging, Humor, Random thoughts, Research

The Gossip Approach to Scientific Writing

Articles, Blogging, Computational Chemistry, Internet, Literature, Publications, Theoretical Chemistry

I’m putting a new blog out there

Articles, Computational Chemistry, DNA, Molecular Dynamics, Paper, PCCP, RSC

Unnatural DNA and Synthetic Biology

Alumni, Articles, Chemistry, Computational Chemistry, Paper, Theoretical Chemistry

New paper in Tetrahedron #CompChem “Why U don’t React?”

![New Paper in JIPH – As(V)@calix[n]arenes](https://i0.wp.com/joaquinbarroso.com/wp-content/uploads/2016/04/fig3.jpg?resize=400%2C200&ssl=1)

Articles, calixarenes, Paper, Research, Uncategorized

New Paper in JIPH – As(V)@calix[n]arenes

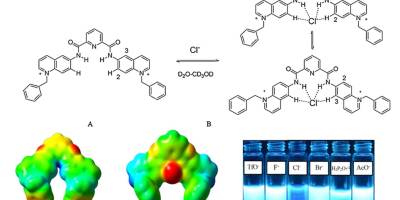

Chemosensors, Computational Chemistry, Paper, Sensors